The core promise of Chord: given N nodes in a distributed system, any key can be located in at most O(log N) network hops — without any central directory. Built for CIS 5530 (Networks and Systems) at Penn, based on the Stoica et al. SIGCOMM 2001 paper.

What Chord Solves

Naive distributed caching assigns keys to nodes by hash(key) % N. This breaks whenever a node joins or leaves: nearly every key remaps to a different node, invalidating the entire cache.

Consistent hashing fixes this. Instead of % N, keys and nodes are both mapped onto a ring (a hash space from 0 to 2^m - 1). A key is owned by the first node clockwise from its position on the ring. When a node joins or leaves, only the keys near that position need to move.

Chord formalizes this and adds the finger table: each node maintains O(log N) shortcuts to nodes at exponentially increasing distances around the ring. This cuts lookup from O(N) hops (walking the ring) to O(log N).

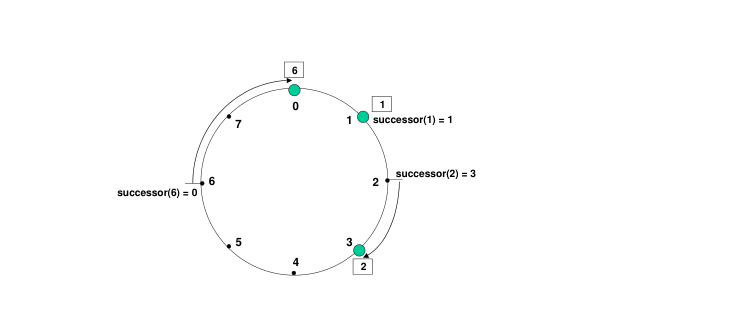

Ring Structure

Each node has a unique ID: SHA-1(ip:port) mod 2^m, where m = 160 for SHA-1. This maps every node to a point on the ring.

The ring above has three nodes (0, 1, 3) on an 8-position space. Key 2 maps to node 3 (first node clockwise). Key 6 maps back to node 0 (wrapping around). Key 1 maps to node 1 exactly.

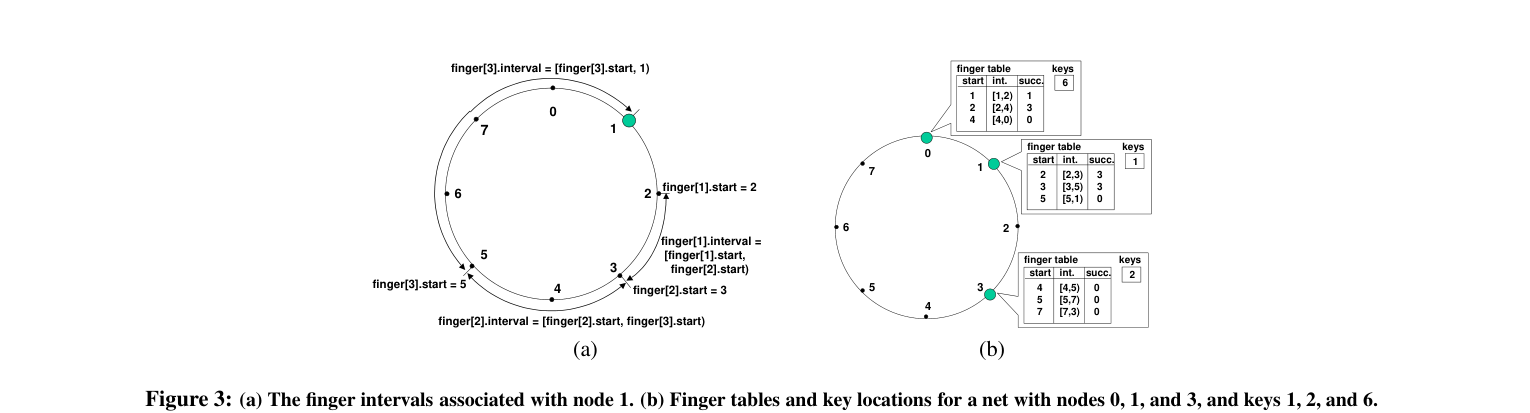

Finger Tables

Each node n maintains a table of m entries:

finger[i] = first node that owns position (n + 2^(i-1)) mod 2^m

For the 3-node ring above, node 1's finger table points to node 3 for the first two fingers (covering positions 2 and 3) and back to node 0 for the third (covering position 5). Each entry roughly doubles the distance covered, which is how O(log N) convergence is achieved.

To look up a key at position k, a node checks its finger table and forwards to the entry whose ID is closest to k without passing it. Each hop halves the remaining distance.

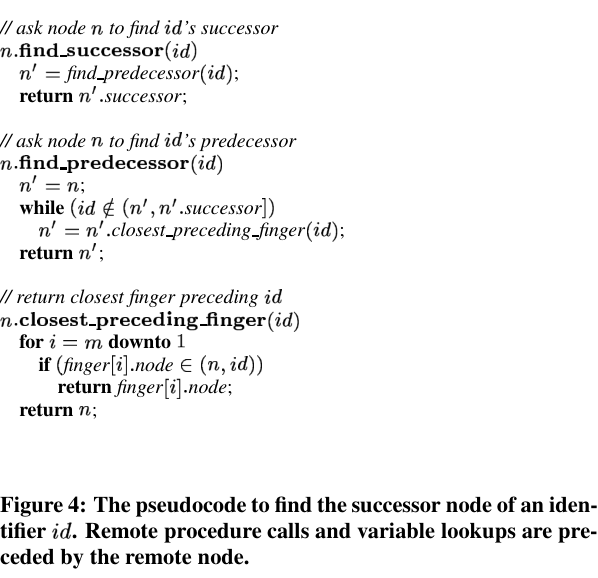

Lookup Pseudocode

The three functions implement the full lookup: find_successor(id) first calls find_predecessor(id) to find the node just before the target, then returns that node's successor. closest_preceding_finger(id) does the actual finger table walk.

Node Join Protocol

When a new node N joins:

- N contacts any known node to find its successor S (first node clockwise from N's ID)

- N sets

predecessor = S.predecessor,successor = S - S updates its predecessor to N

- N copies keys it is now responsible for from S

- Both nodes run

fix_fingers()to update their finger tables over the next stabilize cycle

The stabilize loop runs periodically on every node, checking that its successor's predecessor is still itself and correcting the ring if it isn't. This handles concurrent joins without locking.

Fault Tolerance

Each node maintains a successor list (not just one successor). If successor[0] fails, the node promotes successor[1] and repairs the ring. This tolerates up to k-1 consecutive failures for a successor list of length k.

API

The KV store exposes three operations over the ring:

put(key, value) # store at responsible node

get(key) # retrieve from responsible node

delete(key) # remove from responsible nodeEach call hashes the key to find the responsible node, routes the request via finger table lookups, and executes locally on the owning node. From the caller's perspective, it's a simple dict — the routing is invisible.

What Made This Hard

The subtle part isn't the finger table math — it's timing. Finger tables are eventually consistent. A lookup during a join can route to a stale entry and miss the key. The fix: on a miss, walk the successor chain as a fallback. This adds latency but never returns a false negative for a key that exists.

Implementing this in Python with actual sockets (not simulated calls) forced careful attention to connection handling, timeouts, and partial failures — which is exactly the point of the exercise.